Kahlert School of Computing Faculty and Students to Present at CHI 2024 in Honolulu

The Kahlert School of Computing is gearing up for the CHI 2024 conference on the island of Oʻahu, Hawaiʻi from May 11-16th. Regarded as the premier global event for Human-Computer Interaction, the ACM CHI Conference on Human Factors in Computing Systems unites industry and academic experts to explore avenues for advancing and refining cutting-edge technologies.

The Kahlert School of Computing is proud to be represented by several students and faculty members presenting research at the conference, including: PhD students Joshua Dawson, Eden Fisher, Maxim Lisnic, and faculty members Paul Rosen, Alexander Lex, Marina Kogan, and Jason Wiese.

The works that will be presented by Kahlert School of Computing faculty and students are:

Hospital Employee Experiences Caring for Patients in Smart Patient Rooms

Authors: Joshua Dawson, Eden Fisher, Jason Wiese

This work sheds light on Hospital employees’ current use of smart Hospital room technology with thematic analysis of interviews, highlighting benefits, drawbacks, and potential areas for improvement.

"Yeah, this graph doesn't show that": Analysis of Online Engagement with Misleading Data Visualizations

Authors: Maxim Lisnic, Alexander Lex, Marina Kogan

This investigation analyzes the public's engagement with data visualization posts about COVID-19 on Twitter, discussing opportunities and limitations to effectively leveraging crowdsourced assessments to address data-driven misinformation.

Do You See What I See? A Qualitative Study Eliciting High-Level Visualiz ation Comprehension

Authors: Paul Rosen, Ghulam Jilani Quadri (University of North Carolina), Zhehao Wang (University of North Carolina at Chapel Hill), Arran Zeyu Wang (University of North Carolina-Chapel Hill), Jennifer Adorno (University of South Florida), Danielle Albers Szafir (University of North Carolina-Chapel Hill)

This work explores how viewers interpret visualizations in alignment with designers' communicative goals, contrasting with traditional studies focused on isolated tasks. It contains an investigation into the natural comprehension of high-level patterns in visualizations, revealing discrepancies between stated objectives and audience understanding across different chart types.

In addition, Kahlert School of Computing Associate Professor, Katherine E Isaacs will be one of the chairs for the workshop Human-Notebook Interactions: The CHI of Computational Notebooks. The workshop aims to unite researchers from the CHI community to foster collaboration at the intersection of computational notebook and HCI research, focusing on the effective design and use of interfaces within computational notebook environments.

Amazon awards $50K to Prof. Anton Burtsev to Develop Atmosphere, a Secure and Reliable Operating System

Kahlert School of Computing Assistant Professor Anton Burtsev is a recipient of the 2023 Amazon Research Award for his research aimed at developing a new operating system, Atmosphere, that utilizes advances in programming languages and formal verification for constructing a formally verified operating system kernel. The $50K grant is one of 28 selected in the Automated Reasoning category.

“A grant from Amazon illustrates the potential of Prof. Burtsev’s research for large-scale, practical impact on the challenges faced by industry,” said Prof. Mary Hall, Director of the Kahlert School of Computing.

The exclusive authority of an operating system kernel makes it an attractive target for security attacks. Given the reliance on the large trusted computing base of the kernel, the entire system is at risk without the secure foundation provided by a kernel, even if application logic is verified. With the $50K grant from Amazon, Prof. Burtsev will address the challenge of developing an operating system, called Atmosphere, that is provably secure and reliable. Today, lacking security guarantees, industry-standard kernels make nearly every current computer system on the planet vulnerable to attacks that attempt to exploit these problems.

Modern operating system kernels are inherently complex. A typical kernel must adhere to multiple allocation, synchronization, access control, and object lifetime conventions. As a result, the code for the kernel is routinely affected by the introduction of bugs and vulnerabilities. The root causes of these vulnerabilities include logical and design errors related to low-level reasoning about intricate details of object lifetimes and synchronization, integer and buffer overflows, improper memory remapping, improper handling of uninitialized and freed data, missing pointer and permission checks, division by zero, infinite loops, and data races.

To address these challenges, Atmosphere leverages recent advances in the field of automated formal verification. Atmosphere will be developed in a safe language, Rust, that provides the foundation for lightweight, fine-grained isolation of traditionally monolithic kernel subsystems. Lightweight isolation enables new compartmentalized kernel organization that creates the foundation for modular verification. The system is structured as a collection of isolated subsystems that do not share state. The simplification of the verification of programming languages in Atmosphere is centered around the idea of restricted ownership discipline implemented by Rust. To that end, Atmosphere leverages a recent verification toolchain, Verus, that implements Dafny-like verification for Rust.

The ability to develop verified code at a speed that is comparable to traditional non-verified development enables a new level of trust in the software we use on a daily basis. "It took 20 person-years to develop the first fully-verified microkernel, seL4. We hope, however, that advances in automated verification would allow us to build a similar system in only a fraction of the time," Prof. Burtsev said.

Researchers Demonstrate the First Autonomous Medical Robot That Steers Needles In Vivo

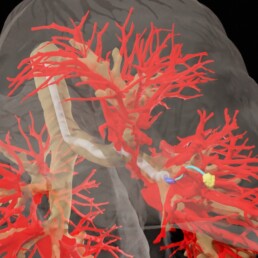

To safely operate, an autonomous vehicle needs to be able to recognize and avoid obstacles in real time. Even a momentary lapse could lead to disastrous consequences, especially if the vehicle is a medical needle, less than a millimeter wide, navigating through the cluttered tissue of a patient’s lungs en route to a cancerous nodule.

Safely and accurately reaching a site inside living tissue is currently often challenging for physicians, especially inside complex, moving anatomy like the lung. Errors can be fatal: the failure to precisely reach a suspicious nodule in the lung can result in an inaccurate diagnosis and hence allow cancer to spread untreated. An autonomous robot has the potential to exceed the accuracy that a human operator can safely accomplish using currently available tools.

A team of researchers from the University of Utah’s John and Marcia Price College of Engineering, University of North Carolina at Chapel Hill, and Vanderbilt University have demonstrated, for the first time, a robotic needle capable of autonomously maneuvering around obstacles to a target in living tissue.

A New Technique by Kahlert School Researchers Paves the Way for Enhancing Training Efficiency for Physics-Informed Neural Networks

Kahlert School of Computing researchers Ramansh Sharma, an incoming PhD student, and Dr. Varun Shankar, an Assistant Professor, have developed a novel method that expedites the training of a specific type of artificial intelligence (AI) system known as Physics-Informed Neural Networks (PINNs). This new technique, referred to as Discretely-Trained PINNs (DT-PINNs), offers promising improvements in training speed and efficiency and has the potential to broaden the applications of PINNs while lowering cost. The work was published at the Conference on Neural Information Processing Systems (NeurIPS) 2022. NeurIPS is a key top-tier event for those in the artificial intelligence field, showcasing research that spans machine learning, computational neuroscience, and more.

Neural networks form the foundation of modern AI technology. Inspired by the human brain, they are comprised by interconnected layers of nodes, or “neurons,” that collaborate to learn from data and make predictions or decisions. PINNs are a unique type of neural network that incorporate principles of physical laws into their structure. PINNs are used in a myriad of fields, including engineering, physics, and meteorology, where they can, for example, help predict structural responses to stress or forecast weather patterns.

Training neural networks is a complex and resource-intensive process. The system needs to adapt the strength of connections between nodes based on input data, a task involving the calculation of many partial derivatives. This is especially challenging for PINNs since the incorporation of exact derivative terms into the training process makes these connections more time-consuming to compute. The DT-PINN method developed by Sharma and Shankar addresses this issue by replacing these exact spatial derivatives (which define how a quantity is changing in space) with highly-accurate approximations calculated using a technique called meshless Radial Basis Function-Finite Differences (RBF-FD). Despite the fact that neural networks are traditionally trained using 32-bit floating point (fp32) operations for the purposes of speed, the University of Utah researchers found that using 64-bit floating point operations (fp64) on the Graphics Processing Unit (GPU) for DT-PINNs leads to faster training times.

“Our work on DT-PINNs represents a substantial progression in the training of PINNs. By improving the training process of PINNs, we have expanded their potential applications and reducing computational costs," Prof. Shankar said. Sharma and Shankar demonstrated the efficiency of DT-PINNs through several experiments, showing that they could train 2-4 times faster on consumer GPUs without compromising on accuracy compared to training via traditional methods. The researchers applied the technique to linear as well as nonlinear spatial problems and demonstrated its applicability to a problem involving space as well as time dimensions.

The results of the research present a significant stride toward more efficient utilization of PINNs across various scientific and technological fields. By reducing the time it takes to train these networks, researchers and practitioners can quickly develop and deploy sophisticated models, aiding our understanding and prediction of complex physical phenomena.